Three workflow patterns every AI agent needs

Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027. Much of that risk comes down to missing structure: AI agents need the sequential, parallel, and evaluation-loop workflow patterns that both Anthropic and AWS treat as fundamentals.

Without those patterns, an agent can think on its feet but has no idea where to walk. Here’s how we approach workflow automation at Tallyfy.


Summary

- AI agents without workflow patterns are expensive chatbots - Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027, largely because they lack structured processes

- Three patterns cover most real work - Sequential (steps in order), parallel (steps at the same time), and evaluation loops (check quality, retry if needed) handle the overwhelming majority of production agent use cases

- Anthropic and AWS agree on these fundamentals - Both Anthropic’s agent guide and AWS prescriptive guidance converge on these same three patterns as the building blocks

- Process definition is the prerequisite, not an afterthought - AI amplifies whatever process it follows, so a broken process automated by AI just breaks faster. Start with the workflow

I’ve watched this movie before. New technology arrives. Everyone rushes to adopt it. Most implementations fail. Then the survivors figure out what the failures missed.

With AI agents, the thing they’re missing is embarrassingly simple. Workflow patterns.

Not “AI strategy.” Not “digital transformation roadmaps.” Just: what steps does this agent follow, in what order, and what happens when something goes wrong?

Anthropic published their guide on building effective agents and buried the lede in one sentence: “The most successful implementations weren’t using complex frameworks or specialized libraries. Instead, they were building with simple, composable patterns.” That’s it. Simple patterns. Three of them handle nearly everything you’d want an AI agent to do in a business context.

Let me walk through each one.

The sequential pattern

This is the one everyone understands intuitively. Step A finishes. Step B starts. Step C waits for B. Like a recipe — you don’t frost a cake before you bake it.

Agent A takes an input, processes Step 1, passes the result to Step 2, then Step 3, and out comes your result. Each step depends on the one before it. No skipping. No shortcuts.

Why does this matter for AI agents specifically? Because most business processes are sequential. Employee onboarding. Invoice approval. Contract review. Insurance claims. These aren’t parallel operations — they’re chains of dependent actions where each step needs the previous step’s output.

Here’s a real example. An AI agent handling invoice approval might work like this:

- Extract invoice data from the PDF (vendor, amount, line items, due date)

- Match the invoice against the purchase order in your ERP

- Flag any discrepancies between the invoice and the PO

- Route to the appropriate approver based on amount thresholds

- Record the approval decision and update the accounting system

Each step feeds the next. The agent can’t route to an approver without first knowing the amount. It can’t flag discrepancies without matching against the PO. Sequential.
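
The invoice chain above can be sketched as plain function composition. This is a minimal illustration, not a production design: the `PURCHASE_ORDERS` lookup, the approver names, and the 5,000 threshold are all made-up stand-ins for a real PDF extractor, ERP, and approval policy.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    vendor: str
    amount: float
    po_number: str


# Hypothetical stand-in for an ERP lookup: PO number -> approved amount.
PURCHASE_ORDERS = {"PO-1001": 1200.00}


def extract(raw: dict) -> Invoice:
    """Step 1: turn the raw document fields into structured data."""
    return Invoice(raw["vendor"], float(raw["amount"]), raw["po_number"])


def match_po(invoice: Invoice) -> float:
    """Step 2: find the purchase order amount for this invoice."""
    return PURCHASE_ORDERS[invoice.po_number]


def find_discrepancies(invoice: Invoice, po_amount: float) -> list:
    """Step 3: compare the invoice against the PO."""
    if abs(invoice.amount - po_amount) > 0.01:
        return [f"amount {invoice.amount:.2f} != PO {po_amount:.2f}"]
    return []


def route(invoice: Invoice, discrepancies: list) -> str:
    """Step 4: pick an approver (thresholds here are invented)."""
    if discrepancies:
        return "ap-review"
    return "manager" if invoice.amount < 5000 else "finance-director"


def run_invoice_workflow(raw: dict) -> dict:
    # Each call consumes the previous step's output; no step can be skipped.
    invoice = extract(raw)
    po_amount = match_po(invoice)
    discrepancies = find_discrepancies(invoice, po_amount)
    approver = route(invoice, discrepancies)
    # Step 5: the returned record is what would be written to accounting.
    return {"vendor": invoice.vendor, "approver": approver,
            "discrepancies": discrepancies}


record = run_invoice_workflow(
    {"vendor": "Acme", "amount": "1200.00", "po_number": "PO-1001"}
)
```

The point of the sketch is the data flow: `route` cannot run without the amount from `extract` and the discrepancy list from `find_discrepancies`, so the order is enforced by the function signatures themselves.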

This is the pattern we keep running into with workflow automation, and it’s where 70% of business processes live. They’re linear. Predictable. And they’re exactly the kind of work that AI agents handle well, as long as someone defined the sequence clearly.

The trap I see constantly? Companies trying to make everything parallel or “intelligent” when sequential would work fine. Andrew Ng made this point when he described agentic workflow patterns: start simple, add complexity only when you can measure the improvement.

At Tallyfy, we built the product around this reality. Most workflows are sequential. That’s not a limitation — it’s the natural structure of how work moves between people. An AI agent that follows a well-defined sequence will outperform a “smart” agent freestyling every time.

The parallel pattern

Now things get interesting.

Some work genuinely can happen at the same time. When it can, making an agent wait in line is wasteful. The parallel pattern sends multiple steps out simultaneously and merges the results when they’re all done.

Agent A takes an input, kicks off Step 1, Step 2, and Step 3 all at once, waits for all of them to finish, merges the outputs, and produces a result.

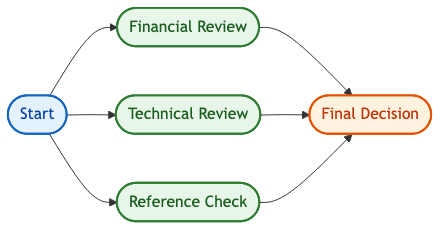

Think about vendor evaluation. You need financial due diligence, technical capability assessment, and reference checks. None of these depends on the others. Running them sequentially means your evaluation takes three times longer than it needs to.

Or multi-department approvals. Legal, finance, and compliance all need to sign off on a contract. They don’t need to go in any particular order. Send the contract to all three simultaneously, collect their responses, merge the results.
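
Here is one way the multi-department approval could look as a fan-out and merge, using Python’s `asyncio.gather`. The `review` coroutine is a hypothetical reviewer that just sleeps and approves; a real one would call an agent or wait on a person.

```python
import asyncio


async def review(department: str, contract: str, delay: float) -> dict:
    """Hypothetical reviewer: simulates work, then approves."""
    await asyncio.sleep(delay)
    return {"department": department, "approved": True}


async def collect_approvals(contract: str) -> dict:
    # Fan out: all three reviews start at the same time.
    results = await asyncio.gather(
        review("legal", contract, 0.03),
        review("finance", contract, 0.01),
        review("compliance", contract, 0.02),
    )
    # Merge: the contract passes only if every department approved.
    # gather() returns results in the order the calls were listed,
    # regardless of which branch finished first.
    return {
        "approved": all(r["approved"] for r in results),
        "by_department": {r["department"]: r["approved"] for r in results},
    }


decision = asyncio.run(collect_approvals("contract-123"))
```

Total wall-clock time is roughly that of the slowest branch rather than the sum of all three, which is the whole appeal of the pattern.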

Anthropic calls this pattern “parallelization,” and uses “sectioning” for the variant that splits a task into independent subtasks that run concurrently. AWS’s guidance describes the same approach. Same idea, different names.

The business value is obvious: speed. But there’s a subtlety that trips people up.

Parallel doesn’t mean uncoordinated. You still need:

- A clear definition of what each parallel branch does

- A merge point that knows how to combine the results

- Error handling for when one branch fails but the others succeed

- Timeout logic so one slow branch doesn’t block everything
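
A rough sketch of the last two requirements, assuming each branch is an `asyncio` coroutine: every branch gets its own timeout and its own error capture, so the merge point always receives a labelled result per branch instead of one exception that takes everything down. The branch names and delays are invented for the vendor evaluation example.

```python
import asyncio


async def run_branch(name: str, delay: float, fail: bool = False) -> str:
    """Hypothetical branch: sleeps, then succeeds or raises."""
    await asyncio.sleep(delay)
    if fail:
        raise RuntimeError(f"{name} failed")
    return f"{name} ok"


async def run_parallel(branches: dict, timeout: float) -> dict:
    async def guarded(name, coro):
        try:
            # wait_for cancels the branch if it exceeds the timeout.
            return name, ("ok", await asyncio.wait_for(coro, timeout))
        except asyncio.TimeoutError:
            return name, ("timeout", None)
        except Exception as exc:  # one failed branch must not sink the rest
            return name, ("error", str(exc))

    pairs = await asyncio.gather(*(guarded(n, c) for n, c in branches.items()))
    return dict(pairs)


async def demo() -> dict:
    return await run_parallel(
        {
            "financial": run_branch("financial", 0.01),
            "technical": run_branch("technical", 0.01, fail=True),
            "references": run_branch("references", 1.0),  # too slow
        },
        timeout=0.1,
    )


outcome = asyncio.run(demo())
```

What the merge step does with a `timeout` or `error` entry (retry, proceed without it, escalate) is a process decision, not a coding one. The sketch only makes sure that decision has the information it needs.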

I’ve seen companies try to parallelize everything and end up with chaos. Three departments all doing overlapping work because nobody defined the boundaries. The agent doesn’t know whose output to trust when they conflict.

Feedback we’ve received suggests the biggest mistake is parallelizing steps that have hidden dependencies. “Sure, legal and finance can review simultaneously” — until you realize finance’s review changes based on legal’s risk assessment. Then you’ve got a parallel pattern that should have been sequential, and you’re merging contradictory results.

The rule of thumb? If neither step needs the other’s output to do its job, they can run in parallel. If either one does, they can’t.

This is exactly what we built at Tallyfy. You define which steps depend on each other and which can run concurrently. The AI agent — connected through MCP — respects those dependencies automatically. It doesn’t guess. It follows the process you defined.
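
To make the dependency idea concrete, here is a small sketch using the standard library’s `graphlib.TopologicalSorter`. It is not how Tallyfy or MCP represent dependencies; the step names and the `parallel_batches` helper are assumptions for illustration. You declare what each step depends on, and the sorter tells you which steps are safe to run together.

```python
from graphlib import TopologicalSorter


def parallel_batches(depends_on: dict) -> list:
    """Group steps into batches; steps within a batch can run concurrently."""
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything whose inputs are done
        batches.append(ready)
        sorter.done(*ready)
    return batches


# Reviews are truly independent: all three land in one batch.
independent = {
    "legal_review": set(),
    "finance_review": set(),
    "compliance_review": set(),
    "sign": {"legal_review", "finance_review", "compliance_review"},
}

# Hidden dependency made explicit: finance needs legal's risk assessment.
with_hidden_dependency = dict(independent, finance_review={"legal_review"})

flat = parallel_batches(independent)
staged = parallel_batches(with_hidden_dependency)
```

In the second graph, `finance_review` drops out of the first batch and waits for `legal_review`. Declaring the dependency is what turns a contradictory merge into a correct sequence.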

The evaluation loop

This is the pattern most people don’t think about. And it’s the one that separates toy demos from production systems.

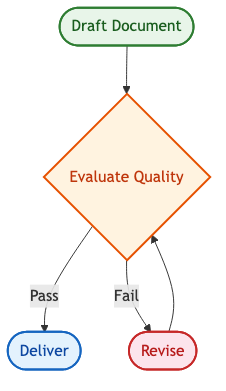

An evaluation loop means the agent doesn’t just execute steps — it checks its own work. After each step (or after a critical step), a separate evaluation process examines the output. Pass? Move on. Fail? Retry, revise, or escalate to a human.

Agent A completes Step 1, then an evaluator checks the output. If it passes, proceed to Step 2. If it fails, retry Step 1 with adjusted parameters, or kick it to a human for review.

Anthropic specifically calls out this pattern as the “evaluator-optimizer workflow” — one LLM generates a response, another evaluates it, and the loop continues until quality meets the bar. They say the two signs it’ll work are: first, that human feedback demonstrably improves the output; and second, that an LLM can provide that same kind of feedback.

Here’s what drives me crazy about most AI agent implementations. They run the steps and assume the output is correct. No verification. No quality gate. Just blind trust in the model’s output.

In compliance checking, that’s a disaster waiting to happen. Imagine an AI agent reviewing loan applications for regulatory compliance. It extracts applicant data, checks it against regulations, and produces a compliance report. Without an evaluation loop, a hallucinated regulation or a misread data field sails through uncorrected.

With an evaluation loop:

- Agent extracts applicant data from the submission

- Evaluate: Is the extraction complete? Are all required fields present? If not, flag what’s missing and re-extract

- Agent checks extracted data against compliance rules

- Evaluate: Does the compliance assessment reference real, current regulations? Does the logic chain hold up? If the evaluator finds a gap, the agent revises before proceeding

- Agent generates the compliance report

- Evaluate: Final quality check — is the report internally consistent? Does it match the source data?
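
The step-then-checkpoint structure above might be sketched like this. Everything domain-specific is invented: `DEMO-DTI` is a made-up rule, not a real regulation, and the checks are simple deterministic functions where a real system would likely use a second model or a rules engine. For brevity, a failed gate stops the run here instead of retrying.

```python
REQUIRED_FIELDS = ("name", "income", "loan_amount")
# Made-up rule for illustration only; not a real regulation.
RULEBOOK = {"DEMO-DTI": "loan_amount must not exceed 5x income"}


class GateFailed(Exception):
    """Raised when an evaluation checkpoint rejects a step's output."""


def extract(state: dict) -> dict:
    sub = state["submission"]
    return {**state, "fields": {k: sub[k] for k in REQUIRED_FIELDS if k in sub}}


def check_extraction(state: dict) -> list:
    return [f"missing field: {k}" for k in REQUIRED_FIELDS
            if k not in state["fields"]]


def assess(state: dict) -> dict:
    f = state["fields"]
    finding = {"rule": "DEMO-DTI", "passed": f["loan_amount"] <= 5 * f["income"]}
    return {**state, "findings": [finding]}


def check_assessment(state: dict) -> list:
    # Guard against citing a rule that does not exist in the rulebook.
    return [f"unknown rule cited: {x['rule']}" for x in state["findings"]
            if x["rule"] not in RULEBOOK]


def report(state: dict) -> dict:
    compliant = all(x["passed"] for x in state["findings"])
    return {**state, "report": {"applicant": state["fields"]["name"],
                                "compliant": compliant}}


def check_report(state: dict) -> list:
    same = state["report"]["applicant"] == state["submission"]["name"]
    return [] if same else ["report does not match source data"]


STAGES = [("extract", extract, check_extraction),
          ("assess", assess, check_assessment),
          ("report", report, check_report)]


def run_gated(submission: dict) -> dict:
    state = {"submission": submission}
    for name, step, check in STAGES:
        state = step(state)
        problems = check(state)  # the checkpoint after each step
        if problems:
            raise GateFailed(f"{name}: {'; '.join(problems)}")
    return state["report"]


result = run_gated({"name": "Ana", "income": 50000, "loan_amount": 200000})
```

Because each check returns a list of specific problems, a failure says which gate tripped and why, which is exactly what a retry or a human reviewer needs.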

Each evaluation is a checkpoint. A gate. A moment where the system asks itself: “Am I confident this is right?”

AWS published detailed guidance on implementing this pattern. Their architecture uses a generator agent and an evaluator agent working in a loop — the evaluator checks coverage, tone, and correctness. If the response falls below a threshold, it gets refined and resubmitted. The loop runs until convergence or a retry limit.

That retry limit matters. Without it, you get infinite loops. The agent keeps trying to improve output that it fundamentally can’t get right, burning tokens and time. Set a ceiling — three retries, five retries, whatever makes sense — and escalate to a human when you hit it.
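
A minimal sketch of a bounded loop, assuming a `generate` callable that accepts the previous feedback and an `evaluate` callable that returns a score plus feedback. Both are stubs here; in practice each would wrap a model call. The 0.8 threshold and the cap of three attempts are arbitrary.

```python
def evaluation_loop(generate, evaluate, threshold=0.8, max_attempts=3):
    """Generate, score, and retry with feedback until accepted or capped."""
    feedback = None
    output = ""
    for attempt in range(1, max_attempts + 1):
        output = generate(feedback)
        score, feedback = evaluate(output)
        if score >= threshold:
            return {"status": "accepted", "output": output, "attempts": attempt}
    # Ceiling reached: stop spending tokens and hand off to a person.
    return {"status": "escalated", "output": output,
            "attempts": max_attempts, "feedback": feedback}


# Stub generator that improves once it receives feedback.
def improving_generator(feedback):
    return "Draft." if feedback is None else "Draft. Cites section 4.2."


# Stub generator that never improves.
def stuck_generator(feedback):
    return "Draft."


# Stub evaluator: passes only if the draft cites a section.
def evaluator(output):
    ok = "section" in output
    return (1.0 if ok else 0.4, "" if ok else "cite the relevant section")


accepted = evaluation_loop(improving_generator, evaluator)
escalated = evaluation_loop(stuck_generator, evaluator)
```

The escalated result carries the last output and the evaluator’s last feedback, so the human who picks it up starts from the failure reason, not from scratch.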

We’ve observed that operations teams who add evaluation loops catch problems that would otherwise reach the end of a process and cause expensive rework. Based on hundreds of implementations, the pattern is consistent: the cost of checking work mid-process is always lower than the cost of fixing mistakes at the end.

Why these patterns matter more than the AI model

Here’s my contrarian take, and I’ll stand by it.

The model doesn’t matter as much as the pattern.

GPT-4, Claude, Gemini, Llama — pick your favorite. Any of them can follow a sequential workflow. All of them can run parallel branches. Any of them can evaluate output quality. The differentiator isn’t the brain. It’s the structure you give the brain to work within.

McKinsey’s 2025 state of AI report found that organizations succeeding with AI agents are three times more likely to fundamentally redesign workflows around AI rather than layering agents onto existing processes. They’re not just buying smarter models. They’re building better patterns.

This connects to something I keep saying: AI on top of chaos gives you turbocharged chaos.

A sequential pattern applied to a broken onboarding process just moves people through broken steps faster. A parallel pattern with poorly defined merge logic creates conflicting outputs at scale. An evaluation loop without clear quality criteria loops forever without improving anything.

The workflow pattern is the skeleton. The AI model is the muscle. You need both, but the skeleton comes first.

Combining patterns for real work

Production systems almost never use just one pattern. They mix all three.

Picture an AI agent handling employee onboarding. The overall flow is sequential — offer letter before background check, background check before IT provisioning, IT provisioning before first-day orientation. But within each major phase, there’s parallelism. IT can set up email, order equipment, and create system accounts simultaneously. And at every critical transition, there’s an evaluation loop — did the background check come back clean? Is all the equipment confirmed for delivery before we schedule the first day?

Sequential at the macro level. Parallel within phases. Evaluation loops at the gates between phases.
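
The three layers can be shown in a few lines. This is a toy sketch of the onboarding example: the task names, the `facts` dictionary, and the gate functions are all assumptions, and `do_task` only sleeps where a real system would do work.

```python
import asyncio


async def do_task(name: str) -> str:
    await asyncio.sleep(0.01)  # stand-in for real work
    return name


# (phase name, tasks that may run concurrently, gate checked afterwards)
PHASES = [
    ("offer", ["send_offer_letter"], lambda facts: True),
    ("background_check", ["run_background_check"],
     lambda facts: facts["background_clean"]),
    ("it_provisioning", ["set_up_email", "order_equipment", "create_accounts"],
     lambda facts: facts["equipment_confirmed"]),
    ("orientation", ["schedule_first_day"], lambda facts: True),
]


async def onboard(facts: dict) -> dict:
    ran = []
    for phase, tasks, gate in PHASES:  # sequential across phases
        # Parallel within a phase.
        finished = await asyncio.gather(*(do_task(t) for t in tasks))
        ran.extend(finished)
        if not gate(facts):  # evaluation gate between phases
            return {"ran": ran, "halted_at": phase}
    return {"ran": ran, "halted_at": None}


smooth = asyncio.run(onboard({"background_clean": True,
                              "equipment_confirmed": True}))
blocked = asyncio.run(onboard({"background_clean": False,
                               "equipment_confirmed": True}))
```

When the background check gate fails, nothing in IT provisioning ever starts, which is the behaviour you want from a gate between phases.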

This layered approach is what Anthropic means by “composable patterns.” You snap them together like building blocks. The sequential pattern provides the overall structure. Parallel speeds up the parts that allow it. Evaluation loops ensure quality at the points that matter.

At Tallyfy, this composability is built into how templates work. You define the overall sequence of steps, mark which ones can run concurrently, and set conditions that act as evaluation gates. When an AI agent connects through our MCP server, it reads that template and follows the combined pattern automatically. No custom orchestration code. No prompt engineering gymnastics.

Getting started without overthinking it

I want to be blunt about something. Most teams overthink this.

They read about multi-agent architectures, swarm intelligence, hierarchical planners, and dynamic task decomposition. Then they spend six months building something that could have been three sequential steps with an evaluation check.

Andrew Ng’s advice is dead right: start with a single agent following a simple workflow. Get that working. Measure the results. Then add complexity only where the measurement shows you need it.

Here’s my practical suggestion. Pick one process in your business that:

- Runs at least weekly

- Involves more than two people

- Has clear pass/fail criteria at each step

- Currently lives in someone’s head or a document nobody reads

Map it as a sequential workflow. Run it manually through an AI agent (even just using a chat interface). See where the agent stumbles. Those stumble points tell you where you need evaluation loops. The steps where people wait unnecessarily tell you where parallelism could help.

Then formalize it. Put the workflow in a tool that the agent can read and follow — something where the process definition is machine-readable, not trapped in a PDF or a wiki page. Connect the agent through MCP. Let it run.
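
As a rough idea of what “machine-readable” can mean, here is a workflow expressed as JSON, with a loader that validates it and a helper that answers the one question an agent keeps asking: what can I do next? The schema (`steps`, `id`, `depends_on`, `gate`) is invented for this sketch and is not Tallyfy’s template format or anything defined by MCP.

```python
import json

# Hypothetical schema, for illustration only.
WORKFLOW_JSON = """
{
  "name": "invoice-approval",
  "steps": [
    {"id": "extract", "depends_on": []},
    {"id": "match_po", "depends_on": ["extract"]},
    {"id": "flag_discrepancies", "depends_on": ["match_po"],
     "gate": "no_open_discrepancies"},
    {"id": "route", "depends_on": ["flag_discrepancies"]},
    {"id": "record", "depends_on": ["route"]}
  ]
}
"""


def load_workflow(text: str) -> dict:
    """Parse the definition and reject obviously broken ones."""
    workflow = json.loads(text)
    ids = [step["id"] for step in workflow["steps"]]
    if len(ids) != len(set(ids)):
        raise ValueError("duplicate step id")
    for step in workflow["steps"]:
        unknown = set(step["depends_on"]) - set(ids)
        if unknown:
            raise ValueError(f"{step['id']} depends on unknown steps: {sorted(unknown)}")
    return workflow


def next_steps(workflow: dict, completed: set) -> list:
    """Steps not yet done whose dependencies are all complete."""
    return [step["id"] for step in workflow["steps"]
            if step["id"] not in completed
            and set(step["depends_on"]) <= completed]


workflow = load_workflow(WORKFLOW_JSON)
first = next_steps(workflow, set())
after_extract = next_steps(workflow, {"extract"})
```

A definition like this can be validated, versioned, and queried. A PDF or wiki page describing the same process offers none of those.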

You’ll learn more from one real workflow running through one real agent than from months of architecture debates.

In the age of AI, defining processes matters more than ever. The companies that win won’t be the ones with the best models. They’ll be the ones with the best-defined workflows for those models to follow.

Related questions

What are AI agent workflow patterns?

AI agent workflow patterns are the structural blueprints that tell an AI agent how to execute multi-step tasks. The three fundamental patterns are sequential (steps run in order, each depending on the previous), parallel (independent steps run simultaneously and merge), and evaluation loops (the agent checks its own output quality and retries or escalates if needed). Anthropic, AWS, and Andrew Ng all converge on these same patterns as the foundation for production agent systems.

Why do most AI agent projects fail?

Gartner predicts over 40% of agentic AI projects will be canceled by the end of 2027. The reasons Gartner cites are escalating costs, unclear business value, and inadequate risk controls. Many companies are automating processes that were already broken, and many vendors are “agent washing”: rebranding existing chatbots and RPA tools as agentic without real agentic capabilities. Gartner estimates only about 130 of the thousands of agentic AI vendors are genuine.

How does the evaluator-optimizer pattern work?

The evaluator-optimizer pattern (also called the evaluation loop or reflect-refine loop) uses two components: a generator that produces output and an evaluator that assesses quality. If the output falls below defined criteria, it gets sent back to the generator with specific feedback for improvement. This loop repeats until quality meets the threshold or a retry limit is reached. AWS documents this pattern as foundational for content generation, code review, compliance checking, and any domain where output quality needs verification before proceeding.

What is the difference between workflows and agents?

Workflows are systems where tasks and tools are orchestrated through predefined paths — the sequence is coded in advance. Agents are systems where the AI dynamically decides what to do next based on its reasoning. In practice, the most effective production systems combine both: defined workflow patterns that provide structure, with AI agents providing the intelligence within each step. The workflow gives the agent guardrails. The agent gives the workflow adaptability.

How does Tallyfy support AI agent workflow patterns?

Tallyfy provides the workflow infrastructure that AI agents need to operate effectively. Process templates define sequential steps, parallel branches, and conditional gates (evaluation checkpoints) in a machine-readable format. Through Tallyfy’s MCP server with 40+ tools, any MCP-compatible AI agent can discover available workflows, launch processes, complete tasks, and follow defined patterns — all through natural language rather than custom code.

About the Author

Amit is the CEO of Tallyfy. He is a workflow expert and specializes in process automation and the next generation of business process management in the post-flowchart age. He has decades of consulting experience in task and workflow automation, continuous improvement (all the flavors) and AI-driven workflows for small and large companies. Amit did a Computer Science degree at the University of Bath and moved from the UK to St. Louis, MO in 2014. He loves watching American robins and their nesting behaviors!

